Here's a short video overviewing the work our lab has done over the past 20+ years.

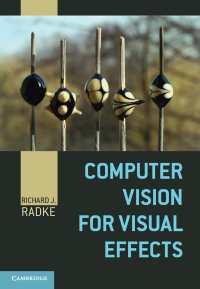

I wrote a textbook called Computer Vision for Visual Effects, which was published by Cambridge University Press in Fall 2012. The book describes classical computer vision algorithms used on a regular basis in Hollywood (such as blue-screen matting, structure from motion, optical flow, and feature tracking) and exciting recent developments that form the basis for future effects (such as natural image matting, multi-image compositing, image retargeting, and view synthesis). It also discusses the technologies behind motion capture and three-dimensional data acquisition. More than 200 original images demonstrating principles, algorithms, and results, along with in-depth interviews with Hollywood visual effects artists, tie the mathematical concepts to real-world filmmaking. Check it out, along with the accompanying set of annotated videos.

Human-Scale, Occupant-Aware Environments

One of my current major research projects is the design of human-scale, occupant-aware environments, such as the Collaborative-Research Augmented Immersive Virtual Environment Laboratory (CRAIVE-Lab). This work proceeds in collaboration with an exciting new initiative between Rensselaer and IBM called CISL: the Cognitive and Immersive Systems Laboratory. I'm particularly interested in cognitive environments that can facilitate group meetings, for example by keeping track of an agenda, remembering participants and their responsibilities, and helping groups make decisions. This video envisions our goals for the research, which was supported by an NSF award from the PFI:BIC program. This paper is a good example of our research on group meeting understanding.

Video Analytics in Camera Networks

I am particularly interested in computer vision problems that occur in large networks of cameras dispersed throughout an environment. Many years ago, an NSF CAREER award supported my lab's investigation into distributed solutions for determining visual overlap and camera calibration in large dynamic camera networks. These days, my main interest in this area is the design of video analytics algorithms applied to network camera images, like you might find at an airport. We're particularly interested in problems involving counterflow and re-identification. This video gives a brief overview. Our research in this area is currently supported by ALERT, the DHS Center of Excellence on Explosives Detection, Mitigation and Response, and SENTRY, the DHS Center of Excellence on Soft-Target Engineering to Neutralize the Threat Reality.

Smart Lighting

I'm the Deputy Director and lead the Controls Thrust in the NSF Engineering Research Center for Lighting Enabled Systems and Applications (LESA), a multi-university effort headquartered at RPI. Our group generally studies how the input from a distributed network of multimodal sensors can drive advanced control algorithms and color-tunable LED lights to achieve a desired light field in a room. In particular, my group studies how time-of-flight sensors mounted in the ceiling of a smart room can locate occupants in real time, and how graphical simulation can be used to help pre-visualize and tune the parameters of lighting control algorithms before a system is installed. Much of this research occurs in our advanced Smart Conference Room.

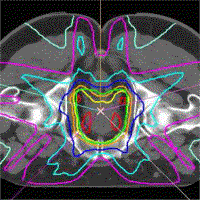

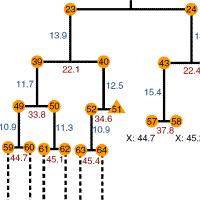

Computer Vision, Machine Learning, and Optimization for IMRT

Through my affiliation with CenSSIS, I supported several undergraduate and graduate projects in biomedical image processing. I am particularly interested in computer vision and machine learning problems related to intensity modulated radiotherapy (IMRT), an exciting new technology for cancer treatment. One project involves developing computer vision algorithms to aid in the automatic segmentation of organs from 3-D CT scans acquired immediately prior to radiation treatment. A second project, supported by an NIH R01 award, investigated the relationship between a patient's body/organ geometry and the multiple radiation beams that are used to treat their cancer. Our algorithm, called Reduced Order Constrained Optimization, or ROCO, creates clinically acceptable IMRT plans in a matter of minutes for several treatment sites, including the prostate, lung, and nasopharynx. This work proceeded in close collaboration with medical physicists at Memorial Sloan-Kettering Cancer Center.

Integrating LiDAR and Digital Images

I am very interested in LiDAR (Light Detection and Ranging), a laser range scanning technology that allows us to acquire detailed 3D models of real-world environments. Our research in this area includes keypoint detection, registration, integration of range and visual imagery, and probabilistic object detection, and was sponsored by the US Army Intelligence and Security Command and DARPA.

Change Detection and Understanding

I am interested in change detection as well as change understanding in image and video sequences. Many years ago, we undertook a comprehensive survey of pixel-level change detection algorithms. We are more broadly interested in leveraging pixel-level change detection algorithms, along with domain-specific models for objects and behaviors of interest, to produce semantic change understanding algorithms that can help interpret and annotate image sequences the same way an expert observer would. To date, we have demonstrated this capability in the context of biomedical image sequences (e.g. time-lapse video of neurons and stem cells) to quickly and accurately summarize megabytes of image sequence data.

Biography

I joined the Electrical, Computer, and Systems Engineering department at Rensselaer Polytechnic Institute in August 2001, where I am now a Full Professor. I have a dual B.A. degree in math and computational and applied math from Rice University, an M.A. in computational and applied math from Rice University, and M.A. and Ph.D. degrees in electrical engineering from Princeton University. I was an intern at the MathWorks, developing numerical linear algebra and signal processing routines. During my Ph.D. I investigated several estimation problems in digital video, including the efficient estimation of projective transformations and the synthesis of photorealistic "virtual video", in collaboration with IBM's Tokyo Research Laboratory.

My current research interests involve computer vision problems related to human-scale, occupant-aware environments, such as person tracking and re-identification with cameras and range sensors. I am the Deputy Director of the NSF Engineering Research Center for Lighting Enabled Systems and Applications (LESA), and am affiliated with the DHS Center of Excellence on Explosives Detection, Mitigation and Response (ALERT), the DHS Center of Excellence on Soft-Target Engineering to Neutralize the Threat Reality (SENTRY), Rensselaer's Center for Automation Technologies and Systems (CATS), Rensselaer's Cognitive and Immersive Systems Laboratory (CISL), and Rensselaer's Experimental Media and Performing Arts Center (EMPAC). I received an NSF CAREER award in March 2003 and was a member of the 2007 DARPA Computer Science Study Group. I am a Senior Member of the IEEE and was a Senior Area Editor of IEEE Transactions on Image Processing. In Fall 2012, Cambridge University Press published my textbook Computer Vision for Visual Effects.

Here is a brief (probably out of date) Curriculum Vitae.

Graduate Students

Current

Mohaiminul Al-Nahian, Ph.D.

Zhuoxu Duan, Ph.D.

Hao Lu, Ph.D.

Zhengye Yang, Ph.D.

Graduated

Omar Al-Kofahi, Ph.D. (2005, co-advised with Badri Roysam; now with Koftech)

Eric Ameres, M.S. (2011, now with RPI Dept. of Cognitive Science)

Srinivas Andra, M.S. (2003)

Shaimaa Bakr, M.S. (2014, now in Ph.D. program at Stanford)

Jacob Becker, M.S. (2009, co-advised with Chuck Stewart; now with Kitware)

Indrani Bhattacharya, Ph.D. (2019, now postdoc at Stanford)

Andrew Calcutt, M.S. (2010, now with the MathWorks, Natick, MA)

Siqi Chen, Ph.D. (2011, now with Netflix)

Richard Chen, M.S. (2017)

Zhaolin Cheng, M.S. (2006, now with Captira Analytics)

Anil Cheriyadat, Ph.D. (2009, now with sturfee)

Haeyong Chung, M.S. (2005, now with the University of Alabama, Huntsville)

Dhanya Devarajan, Ph.D. (2006, now with sturfee)

David Doria, Ph.D. (2012, now with Optimus Ride)

Ashraful Islam, Ph.D. (2022, now with NVIDIA)

Yongwon Jeong, Ph.D. (2006; now with Pusan National University, South Korea)

Li Jia, Ph.D. (2014, now with Apple Computer, Beijing, China)

Devavrat Jivani, Ph.D. (2020, now with NODAR)

Srikrishna Karanam, Ph.D. (2017, now with United Imaging Intelligence, Cambridge, MA)

Dan Kruse, Ph.D. (2016, co-advised with John Wen, now with SRI International, Menlo Park, CA)

Yang (Austin) Li, Ph.D. (2015, now with Facebook)

Chao Ling, M.S. (2006, co-advised with Paul Schoch)

Renzhi Lu, Ph.D. (2007, now with DW Partners, NYC)

Linda Rivera, Ph.D. (2013, now with United Technologies, Brooklyn, NY)

Gyanendra Sharma, Ph.D. (2019, now postdoc at Northeastern)

Eric Smith, Ph.D. (2011, co-advised with Chuck Stewart; now with Kitware)

Ziyan Wu, Ph.D. (2014, now with United Imaging Intelligence, Cambridge, MA)

Lingyu Zhang, Ph.D. (2021, now with Samsung)

Meng Zheng, Ph.D. (2020, now with United Imaging Intelligence, Cambridge, MA)

For Prospective Students

I receive many e-mails from prospective students asking me if I am hiring students into my group or if I will consider their applications based on an attached resume. I unfortunately cannot respond to each of these individually. You should know that I do not make decisions regarding admission or financial aid, and I only consider prospective students who have gone through the formal application process to our department. If you refer to me in your statement of purpose as someone with whom you are interested in working, I will review your application for a possible fit with my group. I hire students with strong image processing and computer vision backgrounds; if your background is in computer hardware or networking, I am probably not an appropriate advisor for you. I am much more likely to respond to your e-mail if you show that you are actually well-informed about my research (e.g., have read some of my papers) instead of expressing a generic interest in computer vision.

Press

- The work of my research group in the NSF ERC for Lighting Enabled Systems and Applications (LESA) was featured in this video made by the Illumination Engineering Society.

- I collaborate with Prof. John Wen on advanced robotics for manufacturing applications, highlighted in this magazine article.

- We recently opened the RAVE (Rensselaer Augmented and Virtual Environment), which I co-direct with Prof. Jason Hicken. You can learn more about it in this podcast.

- This press release overviews my NSF-funded work on intelligent environments for group meetings.

- The work of my research group and several others in the NSF ERC for Lighting Enabled Systems and Applications (LESA) was featured in this article in the Rensselaer alumni magazine.

- In 2014, I was featured in ASEE Prism's "20 Under 40" article on young engineering faculty (though I'm not that young any more!)

- My research on Intensity Modulated Radiotherapy (the work of Renzhi Lu and Yongwon Jeong) has been mentioned in RSNA News, the Albany Times Union, the Troy Record, and Medical Physics Web.

- My research on camera networks (the work of Zhaolin Cheng and Dhanya Devarajan) was mentioned in the Winter 06 Technology Quarterly supplement to the Economist.

- In my assistant professor days I was featured in an IEEE Spectrum article about tenure-track junior faculty. RPI was nice enough to feature me in this article in the Alumni Magazine.

- I was mentioned in RPI's Inside Rensselaer newsletter for my participation in the DARPA Computer Science Study Group.